In the field of artificial intelligence, “Explainable AI” (XAI) has gained significant traction in recent years. As AI systems become increasingly integrated into our daily lives, it is essential to understand how they reach their decisions and why. Explainable AI addresses this need by bringing transparency and comprehensibility to complex machine learning models.

The Black Box Dilemma

Imagine a scenario where an AI-driven loan application system denies your loan request. You are left wondering why, since you have a good credit history and a stable income. In many AI systems, particularly those built on complex machine learning models, the decision process is a “black box”: the algorithm produces an outcome without providing any insight into how or why it was reached. This lack of transparency has raised concerns and skepticism among both users and regulators.

The black box dilemma is not limited to loan approvals; it extends to various critical applications of AI, including medical diagnosis, autonomous vehicles, and criminal justice. In these contexts, understanding the rationale behind AI decisions is crucial for building trust and ensuring fairness.

Explainable AI: Shedding Light on the Black Box

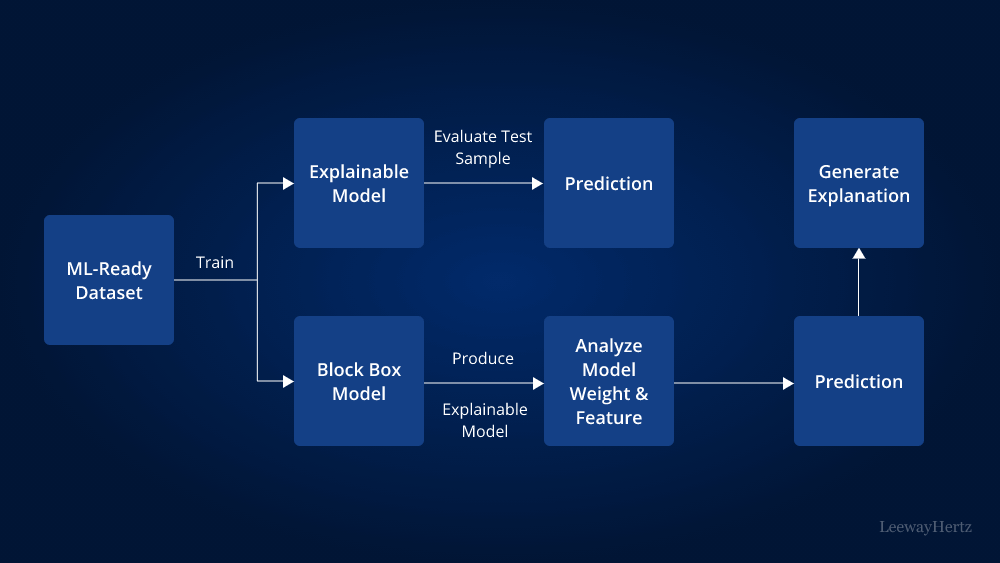

Explainable AI aims to address the black box problem. It is a set of techniques and tools designed to make AI systems more transparent and comprehensible. The primary goal of XAI is to provide insight into how AI models arrive at specific conclusions, allowing users and stakeholders to verify the decisions these systems make and to judge when those decisions deserve trust.

One of the key aspects of XAI is interpretability: the ability to explain AI decisions in terms a human can understand. This involves generating explanations that faithfully reflect how the model actually behaves while remaining concise and relevant to the specific context.

The Importance of Explainable AI

- Transparency: XAI promotes transparency in AI systems, enabling users to understand how decisions are made. This transparency is vital for building trust and accountability, especially in high-stakes domains like healthcare and finance.

- Accountability: When AI systems are explainable, it becomes easier to identify and rectify biases or errors in the models. This accountability is essential for ensuring fairness and preventing discrimination.

- User Trust: Explainable AI can build trust between users and AI systems. When individuals understand why a recommendation was made or a decision was reached, they are more likely to accept and trust the AI’s judgment.

- Regulatory Compliance: Many industries are subject to strict regulations and compliance requirements. XAI helps organizations demonstrate that their AI systems comply with these regulations by providing transparency into their decision-making processes.

- Debugging and Improvement: XAI tools can be used to diagnose and improve AI models. By understanding how an AI system reaches decisions, developers can identify and address issues in the model’s logic or training data.

Techniques of Explainable AI

Explainable AI encompasses various techniques and methodologies, each tailored to the specific needs of the application. Some common approaches include:

- Feature Importance: This technique identifies which features or input variables had the most significant impact on an AI model’s decision. It helps users understand which factors influenced the outcome.

- Local Interpretability: Local explanations focus on explaining a single AI decision. Techniques like LIME (Local Interpretable Model-Agnostic Explanations) perturb the input behind one prediction, observe how the model’s output changes, and fit a simple interpretable model (such as a sparse linear model) to that local behavior, shedding light on the factors that contributed to that decision.

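- Global Interpretability: Global explanations aim to provide an overall understanding of an AI model’s behavior. One common route is to aggregate many local explanations. SHAP (SHapley Additive exPlanations), for example, assigns each feature a contribution score for an individual prediction based on Shapley values from cooperative game theory; averaging the magnitude of these scores across a dataset reveals which features matter most overall, helping users grasp the model’s general trends.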

- Rule-Based Explanations: Rule-based explanations express AI decisions as a set of if-then rules, making it easy for users to understand the logic behind each decision.

- Visual Explanations: Visualizations are powerful tools for explaining AI results. They can represent complex data and model behavior in a way that is accessible to non-experts.
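To make the feature importance idea concrete, here is a minimal Python sketch of permutation importance, one common model-agnostic way to measure it: shuffle one feature’s column, re-score the model, and record how much accuracy drops. Everything in it is hypothetical and assumed for illustration: the toy `loan_model`, the synthetic `make_dataset` generator, the 10% label noise, and the feature names do not come from any real lending system. For fitted scikit-learn estimators, the library provides `sklearn.inspection.permutation_importance`; the function below is a standalone sketch, not that API.

```python
import random


def loan_model(x):
    """Hypothetical toy classifier: approve (1) if a linear score is positive.

    x = (credit_history_years, income_thousands, debt_ratio, zip_digit).
    The zip digit is deliberately ignored by the model.
    """
    history, income, debt, _zip_digit = x
    return 1 if 0.3 * history + 0.02 * income - 4.0 * debt - 1.0 > 0 else 0


def make_dataset(n, rng):
    """Synthetic applicants; labels follow the model's rule with 10% label noise."""
    rows, labels = [], []
    for _ in range(n):
        row = (rng.uniform(0, 15), rng.uniform(20, 120),
               rng.uniform(0, 0.8), rng.randint(0, 9))
        label = loan_model(row)
        if rng.random() < 0.1:
            label = 1 - label  # flip a few outcomes so accuracy is not perfect
        rows.append(row)
        labels.append(label)
    return rows, labels


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, n_repeats=5, seed=0):
    """Mean drop in accuracy when each feature's column is randomly shuffled."""
    rng = random.Random(seed)
    base = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)
            # Replace feature j in every row with its shuffled value; other features stay put.
            shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(base - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


rows, labels = make_dataset(500, random.Random(42))
names = ["credit history", "income", "debt ratio", "zip digit"]
for name, score in zip(names, permutation_importance(loan_model, rows, labels)):
    print(f"{name:>15}: {score:.3f}")
```

Because `loan_model` ignores the zip-digit column, shuffling it never changes a prediction, so its importance comes out as exactly 0.0; the other scores depend on the random data. Averaging over several shuffles (`n_repeats`) smooths out the noise from any single permutation.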
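The idea behind SHAP can be illustrated with exact Shapley values on a tiny model. The sketch below assumes a hypothetical `loan_score` function and treats a feature as “absent” by setting it to a hypothetical baseline applicant’s value. It enumerates every coalition of features, which is only feasible for a handful of inputs; the `shap` library relies on approximations and model-specific algorithms instead, and nothing here reflects its API.

```python
from itertools import combinations
from math import factorial


def loan_score(x):
    """Hypothetical toy scoring model, assumed for illustration only.

    x = (credit_history_years, income_thousands, debt_ratio). The history-income
    interaction term means attributions are not just weight * feature value.
    """
    history, income, debt = x
    return 0.3 * history + 0.02 * income - 2.0 * debt + 0.01 * history * income


def shapley_values(model, x, baseline):
    """Exact Shapley value of each feature for model(x) relative to model(baseline).

    Features outside a coalition are set to their baseline value. Enumerating
    all 2^n coalitions is exponential, so this only suits very small n.
    """
    n = len(x)

    def value(coalition):
        # Features in the coalition keep the applicant's value; the rest use the baseline.
        z = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions S of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i when added to coalition S.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


applicant = (2.0, 40.0, 0.5)  # short credit history, modest income, high debt ratio
baseline = (8.0, 60.0, 0.3)   # hypothetical "typical applicant" reference point
phi = shapley_values(loan_score, applicant, baseline)
for name, contribution in zip(["credit history", "income", "debt ratio"], phi):
    print(f"{name:>15}: {contribution:+.3f}")
print(f"sum of contributions: {sum(phi):+.3f}")
print(f"score gap vs baseline: {loan_score(applicant) - loan_score(baseline):+.3f}")
```

For this applicant, credit history receives about -4.8, income about -1.4, and debt ratio about -0.4, with the interaction term split between the two features it involves. Together they add up to the -6.6 gap between the applicant’s score and the baseline score, which is the additivity property behind SHAP’s name. Averaging the absolute values of such attributions over many applicants gives the kind of global feature ranking described under Global Interpretability.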

Challenges in Implementing Explainable AI

While the benefits of Explainable AI are clear, implementing it can be challenging:

- Trade-offs: Model performance and explainability can pull in opposite directions. Replacing a complex model with a simpler, more interpretable one may reduce accuracy, although how large that cost is varies by task.

- Complexity: Some AI models, especially deep learning models, can be extremely complex, making it difficult to provide straightforward explanations.

- Privacy: In some cases, providing explanations may reveal sensitive or private information about individuals, raising privacy concerns.

- User Understanding: Ensuring that explanations are genuinely understandable by non-experts can be a significant challenge.

The Future of Explainable AI

As AI continues to advance and integrate into more aspects of our lives, Explainable AI will play an increasingly critical role. Researchers and developers are actively working to overcome these challenges and to build more accessible and transparent AI systems.

In the future, we can expect to see the widespread adoption of Explainable AI in sectors like healthcare, finance, and autonomous systems. Additionally, regulatory bodies will likely require organizations to demonstrate the explainability of their AI systems to ensure fairness and accountability.

In conclusion, Explainable AI is a powerful tool for opening up the black box of artificial intelligence. By supporting transparency, accountability, and user trust, XAI has the potential to change the way we interact with AI systems, making them not just powerful but also understandable and ethical tools for the benefit of society. As the field of XAI continues to evolve, we can look forward to a future where AI decisions are less shrouded in mystery and more open to scrutiny and comprehension.