In artificial intelligence and machine learning, the term “neural networks” has become ubiquitous. These computational models, loosely inspired by the human brain, have transformed fields ranging from image and speech recognition to autonomous driving and healthcare. In this article, we will explore the fundamentals of neural networks, their architecture, how they learn, and their significance in the modern world.

What Are Neural Networks?

Neural networks, often referred to as artificial neural networks (ANNs), are a class of machine learning models loosely inspired by the way the human brain works. They are composed of interconnected nodes, known as neurons, each of which computes a weighted sum of its inputs and passes the result through an activation function. Working together, these neurons process and analyze complex data. Neural networks are particularly well-suited to tasks that involve pattern recognition, and they can learn to make predictions or decisions from data.
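To make the idea of a neuron concrete, here is a minimal sketch of a single artificial neuron in plain Python. The sigmoid activation and the example numbers are assumptions chosen for illustration; the article does not prescribe a particular activation function.

```python
import math
from typing import Sequence


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs: Sequence[float], weights: Sequence[float], bias: float) -> float:
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


# Hypothetical inputs and weights, picked so the weighted sum is exactly zero.
activation = neuron(inputs=[0.5, -1.0], weights=[2.0, 1.0], bias=0.0)
print(activation)  # sigmoid(0) = 0.5
```

A full network is many such neurons wired together, with the outputs of one group feeding the inputs of the next.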

The Basic Structure of Neural Networks

At the heart of a neural network is its architecture, which consists of layers of neurons. The three main types of layers are:

- Input Layer: This layer receives the initial data or features to be processed. Each neuron in the input layer represents a specific feature of the input data.

- Hidden Layers: Between the input and output layers, there may be one or more hidden layers. These layers are responsible for performing complex computations and feature extraction. The number of hidden layers and neurons in each layer can vary depending on the complexity of the task.

- Output Layer: The output layer produces the final result or prediction of the neural network. The number of neurons in the output layer depends on the nature of the task. For instance, a binary classification problem typically needs only one output neuron, while a multi-class classification problem typically uses one neuron per class.
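The three layer types can be sketched as a single forward pass. The example below is an illustrative assumption, not a reference implementation: it uses 4 input features, one hidden layer of 5 neurons with ReLU, and 3 output neurons with softmax for a hypothetical three-class problem. The weights are random and untrained, so the resulting probabilities carry no meaning yet.

```python
import math
import random
from typing import Callable, List, Tuple


def relu(z: float) -> float:
    """Pass positive values through and clip negatives to zero."""
    return max(0.0, z)


def identity(z: float) -> float:
    """Leave the raw score unchanged."""
    return z


def dense(inputs: List[float], weights: List[List[float]],
          biases: List[float], activation: Callable[[float], float]) -> List[float]:
    """One fully connected layer: each row of `weights` feeds one neuron."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]


def softmax(scores: List[float]) -> List[float]:
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def init_layer(n_in: int, n_out: int,
               rng: random.Random) -> Tuple[List[List[float]], List[float]]:
    """Random weights in [-1, 1] and zero biases (an arbitrary choice)."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


rng = random.Random(0)
w_hidden, b_hidden = init_layer(4, 5, rng)   # input layer (4) -> hidden layer (5)
w_out, b_out = init_layer(5, 3, rng)         # hidden layer (5) -> output layer (3)

features = [0.2, -0.4, 1.5, 0.7]             # one example with 4 features
hidden = dense(features, w_hidden, b_hidden, relu)
scores = dense(hidden, w_out, b_out, identity)
probabilities = softmax(scores)              # one probability per class
```

For a binary problem, the output layer would shrink to a single neuron, usually with a sigmoid activation in place of softmax.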

How Neural Networks Learn

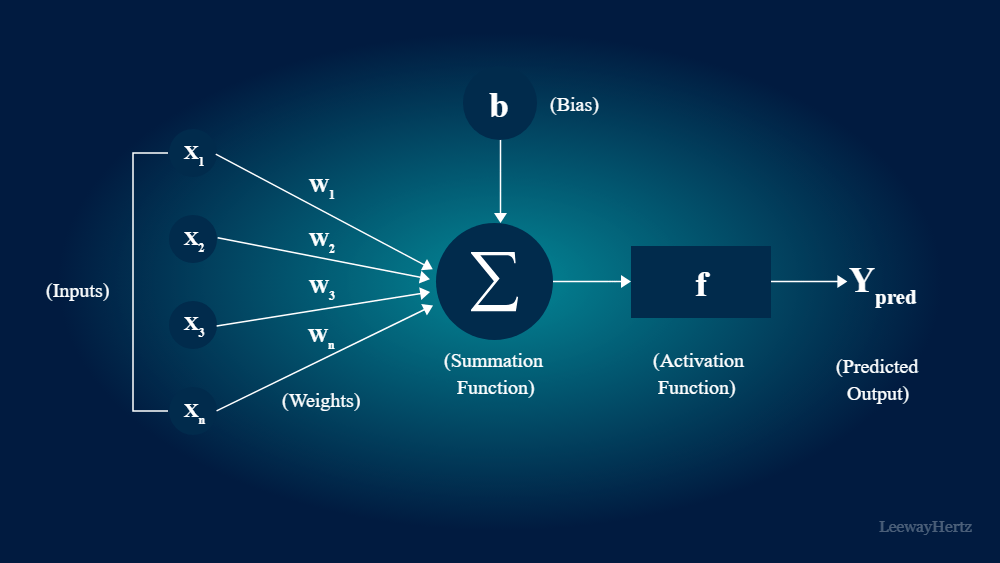

Neural networks learn through a process known as training. During training, the network is exposed to a labeled dataset, meaning that each input is paired with the correct output or target. A loss function measures the difference between the network's predictions and the actual targets, and the network adjusts its internal parameters, known as weights and biases, to reduce that loss. The adjustment is made with an optimization technique called gradient descent, which relies on gradients computed by the backpropagation algorithm.

The key to the success of neural networks lies in their ability to automatically learn complex patterns and representations from the data. This is achieved by iteratively adjusting the weights and biases in response to the errors made during prediction. Over many iterations, the network's predictions become more accurate.
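The training loop described above can be sketched on a deliberately tiny problem. The example below is a simplification under stated assumptions: a single sigmoid neuron, the logical OR function as the labeled dataset, binary cross-entropy as the loss, and a learning rate and epoch count picked by hand. With only one neuron the gradient can be written directly, so no general backpropagation routine is needed.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


# Labeled dataset: inputs paired with targets (logical OR, chosen for illustration).
data: List[Tuple[List[float], float]] = [
    ([0.0, 0.0], 0.0),
    ([0.0, 1.0], 1.0),
    ([1.0, 0.0], 1.0),
    ([1.0, 1.0], 1.0),
]


def predict(weights: List[float], bias: float, x: List[float]) -> float:
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def mean_loss(weights: List[float], bias: float) -> float:
    """Average binary cross-entropy over the dataset."""
    total = 0.0
    for x, y in data:
        p = predict(weights, bias, x)
        total -= y * math.log(p) + (1.0 - y) * math.log(1.0 - p)
    return total / len(data)


weights, bias = [0.0, 0.0], 0.0
learning_rate = 0.5                      # hand-picked, not tuned
initial_loss = mean_loss(weights, bias)

for epoch in range(2000):
    grad_w = [0.0, 0.0]
    grad_b = 0.0
    for x, y in data:
        # For sigmoid + cross-entropy, the gradient w.r.t. the weighted sum
        # is simply (prediction - target).
        error = predict(weights, bias, x) - y
        for i, xi in enumerate(x):
            grad_w[i] += error * xi / len(data)
        grad_b += error / len(data)
    # Gradient descent step: move each parameter against its gradient.
    weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
    bias -= learning_rate * grad_b

final_loss = mean_loss(weights, bias)
predictions = [round(predict(weights, bias, x)) for x, _ in data]
print(initial_loss, final_loss, predictions)
```

On this toy data, the loss should fall well below its starting value and the rounded predictions should match the OR targets. Real networks follow the same loop, but with many layers, far more parameters, and gradients computed automatically.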

Applications of Neural Networks

The versatility of neural networks has led to their adoption across a wide range of applications. Some notable examples include:

- Image Recognition: Convolutional Neural Networks (CNNs) have revolutionized image recognition tasks, such as object detection, facial recognition, and medical image analysis.

- Natural Language Processing (NLP): Recurrent Neural Networks (RNNs) and Transformer models have significantly improved language understanding, enabling applications like chatbots, language translation, and sentiment analysis.

- Autonomous Vehicles: Neural networks are essential components of self-driving cars, helping them perceive their surroundings, make decisions, and navigate safely.

- Healthcare: Neural networks are used for disease diagnosis, drug discovery, and predicting patient outcomes based on medical data.

- Finance: Neural networks are employed in fraud detection, stock market prediction, and risk assessment.

- Gaming: In the gaming industry, neural networks are used for character behavior, game testing, and creating intelligent opponents.

Challenges and Future Directions

While neural networks have achieved remarkable success, they are not without challenges. Some of the key issues include:

- Overfitting: Neural networks can fit the training data very closely, including its noise, and then struggle to generalize to unseen data.

- Data Requirements: Large amounts of labeled data are often needed for effective training, which may not be readily available for all tasks.

- Interpretability: Understanding why a neural network makes a particular decision can be challenging, especially for complex models.

Looking to the future, researchers are exploring various techniques to address these challenges. Explainable AI (XAI) is an emerging field focused on making neural network decisions more interpretable. Additionally, advances in transfer learning and data augmentation are helping mitigate data scarcity issues.
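As a small illustration of data augmentation, the sketch below doubles a hypothetical image dataset by adding a mirrored copy of every example. Images are represented as nested lists of pixel values, and the horizontal flip is only one assumed transformation among many; it is appropriate only when mirroring does not change the label, which is not true for tasks such as reading text or digits.

```python
from typing import List, Tuple

Image = List[List[int]]


def flip_horizontal(image: Image) -> Image:
    """Mirror an image left to right by reversing every row."""
    return [list(reversed(row)) for row in image]


def augment(dataset: List[Tuple[Image, str]]) -> List[Tuple[Image, str]]:
    """Return the original examples plus a mirrored copy of each one."""
    augmented = []
    for image, label in dataset:
        augmented.append((image, label))
        augmented.append((flip_horizontal(image), label))
    return augmented


# A made-up 2x3 "image" with a made-up label, for illustration only.
tiny_image = [[0, 1, 2],
              [3, 4, 5]]
dataset = [(tiny_image, "cat")]
augmented = augment(dataset)
print(len(augmented))    # 2
print(augmented[1][0])   # [[2, 1, 0], [5, 4, 3]]
```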

Conclusion

Neural networks have become a central tool in artificial intelligence and machine learning. Their ability to learn complex patterns and representations from data has enabled applications across many domains, from image recognition and language processing to healthcare and finance. Challenges such as overfitting, data requirements, and interpretability persist, but ongoing research continues to improve the capabilities and reliability of neural networks. As the technology evolves, their role in shaping our world is expected to grow.