In artificial intelligence (AI), the term “foundation models” has become a buzzword, but one that carries real significance. These models are trained at massive scale on broad data and can be adapted to many tasks, most visibly understanding and generating human-like text, and they have reshaped the AI ecosystem. In this article, we will look at where foundation models came from, what they can do, and the impact they are having across domains.

The Genesis of Foundation Models

Foundation models are a product of advances in deep learning, particularly in natural language processing (NLP). The story begins with neural networks, computational models loosely inspired by the human brain. These networks, composed of interconnected nodes called neurons, became the backbone of modern AI systems.

One pivotal moment in the evolution of foundation models was the Transformer architecture, introduced in the 2017 paper “Attention Is All You Need” by Vaswani et al. Attention mechanisms already existed, but the Transformer was built entirely around them, dropping the recurrent layers that earlier sequence models relied on. Attention lets a model weigh the most relevant parts of its input when processing each token, and the design parallelizes well during training. Transformers went on to outperform previous architectures in tasks like machine translation and text generation.
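To make the idea of "focusing on relevant parts of the input" concrete, here is a minimal sketch of scaled dot-product attention, the core operation of the Transformer, using only the Python standard library. It is an illustration rather than a faithful implementation: a real Transformer first passes the inputs through learned query, key, and value projections and runs several attention heads in parallel, all of which is omitted here. The function names and the toy vectors are assumptions made for this example.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # The output is the weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Toy self-attention: three made-up token vectors attend to each other.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)

for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how strongly one token attends to every token in the sequence. The first token puts less weight on the second token, whose vector points in a different direction, than on itself or the third.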

The Rise of GPT and BERT

Two of the most influential foundation models built on the Transformer architecture are GPT (Generative Pre-trained Transformer) and BERT (Bidirectional Encoder Representations from Transformers).

GPT, developed by OpenAI, gained prominence for its ability to generate coherent and contextually relevant text. GPT-3, released in 2020 as the third iteration of the model, has 175 billion parameters, making it one of the largest foundation models at the time of its release. It demonstrated strong capabilities in applications ranging from language translation to content generation, often given only a few examples in the prompt.
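To put 175 billion parameters in perspective, a quick back-of-the-envelope calculation estimates how much memory the weights alone would occupy. The bytes-per-parameter figures below are assumptions about numeric precision (4 bytes for 32-bit floats, 2 bytes for 16-bit floats), not a statement about how GPT-3 is actually stored or served, and the estimate ignores everything else a running model needs.

```python
PARAMS = 175_000_000_000  # GPT-3 parameter count quoted above

def weight_memory_gb(num_params, bytes_per_param):
    """Memory needed to store the weights alone, in gigabytes (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9

fp32_gb = weight_memory_gb(PARAMS, 4)  # assumed 32-bit floats
fp16_gb = weight_memory_gb(PARAMS, 2)  # assumed 16-bit floats

print(f"32-bit weights: {fp32_gb:.0f} GB")  # prints "32-bit weights: 700 GB"
print(f"16-bit weights: {fp16_gb:.0f} GB")  # prints "16-bit weights: 350 GB"
```

Even at 16-bit precision, the weights would not fit in the memory of a single typical accelerator, which is one reason models of this size are split across many devices.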

BERT, on the other hand, brought deep bidirectional context to pre-trained language models. Developed by Google and released in 2018, BERT is trained to predict masked-out words from the words on both sides of them, so it learns to interpret each word using context in both directions. This bidirectional approach significantly improved performance on a wide range of NLP tasks, such as sentiment analysis and question answering.
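The difference between reading in one direction and reading in both can be made concrete with attention masks. The sketch below is a simplified illustration: GPT-style models generate text left to right, so each position may only look at itself and earlier positions, while a BERT-style encoder lets every position look at the whole sentence. The helper names and the example sentence are assumptions made for this example, and details such as BERT's special mask token are left out.

```python
def causal_mask(n):
    """Position i may attend only to positions 0..i (left to right, GPT-style)."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]

def bidirectional_mask(n):
    """Every position may attend to every position (BERT-style)."""
    return [[1] * n for _ in range(n)]

def visible_words(mask, words, position):
    """Words that the token at `position` is allowed to see under `mask`."""
    return [w for w, allowed in zip(words, mask[position]) if allowed]

words = ["the", "bank", "of", "the", "river"]
n = len(words)

# Which words can inform the representation of "bank" (position 1)?
left_only = visible_words(causal_mask(n), words, 1)
both_sides = visible_words(bidirectional_mask(n), words, 1)

print(left_only)   # prints ['the', 'bank']
print(both_sides)  # prints ['the', 'bank', 'of', 'the', 'river']
```

With the bidirectional mask, the word "river" is available when interpreting "bank", which is the kind of context that helps on understanding tasks. The causal mask hides it, which is what makes left-to-right generation possible.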

Foundation Models in Action

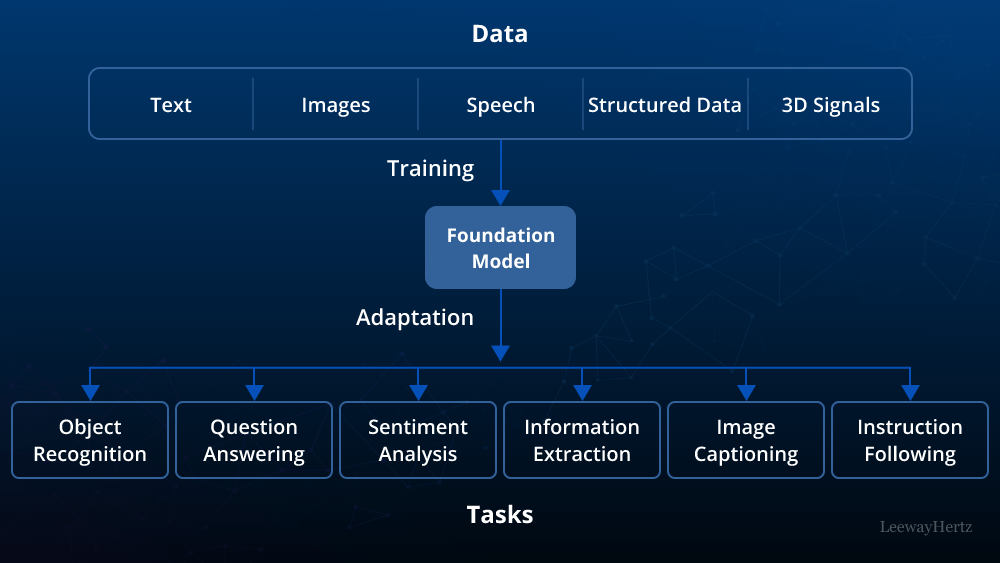

The true power of foundation models lies in their versatility. These models can be fine-tuned for specific tasks, allowing them to excel in a multitude of applications. Here are some notable examples:

- Language Translation: Foundation models have substantially improved machine translation. They can translate text between many languages with high accuracy, lowering language barriers and facilitating global communication.

- Content Generation: Businesses are using foundation models to automate content creation. From writing news articles to generating product descriptions, these models produce high-quality content efficiently.

- Chatbots and Virtual Assistants: AI-powered chatbots and virtual assistants leverage foundation models to provide human-like interactions, offering assistance, answering queries, and even engaging in casual conversations.

- Healthcare: In the healthcare sector, foundation models are being used to support clinicians in diagnosing diseases, analyzing medical records, and predicting patient outcomes, with the potential to save lives through earlier detection and better-informed decisions.

- Financial Analysis: Foundation models are utilized for sentiment analysis of financial news, predicting market trends, and risk assessment, aiding investors and financial institutions in decision-making processes.

Ethical Considerations and Challenges

While foundation models hold great promise, they also raise significant ethical concerns. These include:

- Bias and Fairness: Models trained on vast amounts of internet text may inherit biases present in the data. Efforts are ongoing to reduce bias and promote fairness in AI systems.

- Privacy: The sheer scale of foundation models raises concerns about user privacy, as they can inadvertently memorize and generate sensitive information. Ensuring data privacy is crucial.

- Environmental Impact: Training large models consumes substantial computational resources, contributing to environmental concerns. Research is ongoing to make AI training more sustainable.

- Regulation: Policymakers are grappling with how to regulate foundation models to ensure responsible AI development and use.

The Future of Foundation Models

Foundation models have already transformed industries and are poised to continue doing so in the future. The research community is actively working on developing even more capable models while addressing ethical and environmental concerns.

One exciting avenue is the development of multimodal models, which can process and generate text, images, and other data types within a single model. This could open up new possibilities in content creation, understanding, and interaction.

In conclusion, foundation models represent a major milestone in AI development. They reflect the rapid progress in deep learning and have the potential to drive innovation across many sectors. As AI continues to evolve, responsible development and attention to ethics will be vital to realizing that potential.