Artificial Intelligence (AI) has rapidly evolved, transforming the way we interact with technology and data. As AI becomes increasingly integrated into our daily lives and critical systems, the security of AI models becomes paramount. In this article, we will explore the concerns surrounding AI model security, best practices to mitigate these risks, and cutting-edge techniques to safeguard AI models.

Concerns in AI Model Security

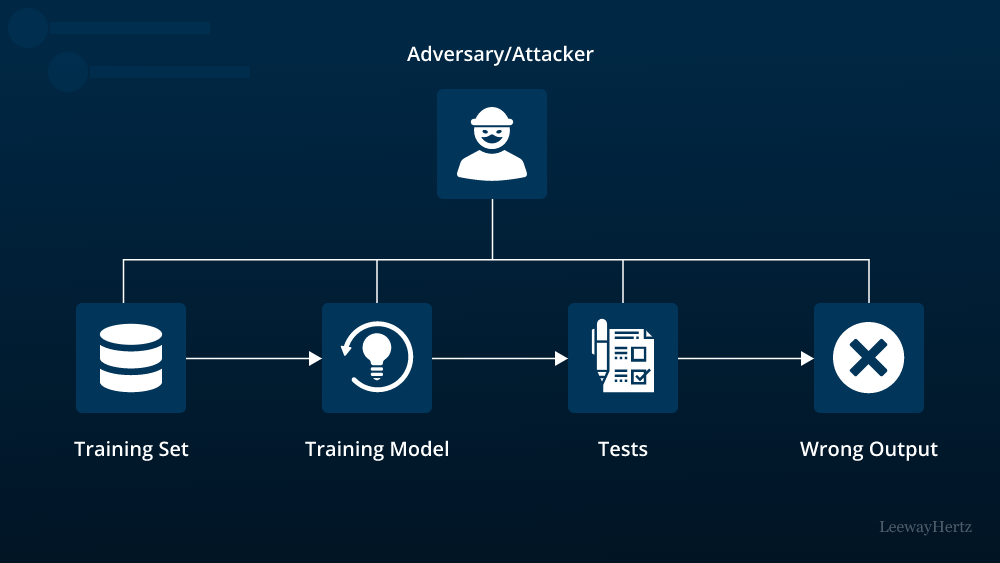

1. Adversarial Attacks

One of the primary concerns in AI model security is adversarial attacks. These attacks manipulate input data, often with perturbations too small for a human to notice, so that the model makes an incorrect prediction. Adversarial attacks can have significant consequences, especially in applications like autonomous vehicles, where a single incorrect decision can lead to catastrophic outcomes.
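To make the idea concrete, here is a minimal sketch of one well-known attack, the Fast Gradient Sign Method (FGSM), against a toy logistic-regression classifier. The weights, the input, and the perturbation budget `epsilon` are made-up assumptions for illustration, not a real model. FGSM nudges every feature by `epsilon` in the direction that most increases the model's loss for the true label.

```python
import math

# Hypothetical toy model: logistic regression with fixed, made-up weights.
WEIGHTS = [2.0, -1.5, 0.5]
BIAS = -0.25


def predict_proba(x):
    """Probability of class 1 under the toy logistic-regression model."""
    z = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))


def sign(v):
    """Return -1, 0 or 1 according to the sign of v."""
    return (v > 0) - (v < 0)


def fgsm(x, y_true, epsilon):
    """Fast Gradient Sign Method: shift every feature by epsilon in the
    direction that increases the log-loss for the true label y_true."""
    p = predict_proba(x)
    # For logistic regression, d(log-loss)/d(x_i) = (p - y) * w_i.
    grad = [(p - y_true) * w for w in WEIGHTS]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


x_clean = [0.4, 0.2, 0.1]
x_adv = fgsm(x_clean, y_true=1, epsilon=0.3)
print(predict_proba(x_clean), predict_proba(x_adv))
```

With these hypothetical weights, the clean input scores about 0.57 (class 1), while the perturbed input, whose features each moved by at most 0.3, scores about 0.29 (class 0). Attacks on deep networks follow the same recipe, with the gradient computed by automatic differentiation.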

2. Data Privacy

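AI models are often trained on vast datasets containing sensitive information. Privacy concerns arise when a model inadvertently memorizes and reveals parts of that data, or when malicious actors query or probe the model to extract private information, for example to infer whether a specific person's record was part of the training set (a membership inference attack).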

3. Model Theft

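The theft of AI models is another growing concern. Attackers can steal proprietary models either by exfiltrating the model files directly or by repeatedly querying a prediction API and using the responses to reconstruct a functionally equivalent copy (model extraction). A stolen model can then be used for the attacker's own purposes, leading to financial losses and a loss of competitive advantage for the organization that built it.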

4. Model Fairness and Bias

AI models can perpetuate biases present in training data, leading to unfair or discriminatory outcomes. Ensuring fairness in AI models is not just an ethical concern but also a legal one, as discriminatory models can lead to lawsuits and reputational damage.

Best Practices for AI Model Security

1. Robust Model Training

To defend against adversarial attacks, it’s crucial to incorporate robust training techniques. Adversarial training augments the training data with adversarial examples, generated against the current model and paired with their correct labels, so that the model learns to classify these perturbed inputs correctly and becomes more resilient to similar attacks.
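A minimal sketch of adversarial training, assuming a toy logistic-regression model, synthetic two-cluster data, and FGSM as the attack (practical defenses often use stronger multi-step attacks such as projected gradient descent). At every step the adversarial example is regenerated against the current weights, and the model is updated on both the clean and the perturbed input with the same label. All names and hyperparameters here are illustrative choices, not a reference implementation.

```python
import math
import random


def predict(w, b, x):
    """Logistic-regression probability of class 1."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))


def fgsm(w, b, x, y, eps):
    """FGSM perturbation computed against the model (w, b)."""
    g = predict(w, b, x) - y  # d(log-loss)/d(x_i) = g * w_i
    return [xi + eps * ((g * wi > 0) - (g * wi < 0)) for xi, wi in zip(x, w)]


def adversarial_train(data, eps=0.3, lr=0.1, epochs=20, seed=0):
    """SGD on each clean example and on its FGSM counterpart, regenerated
    against the current weights at every step."""
    rng = random.Random(seed)
    data = list(data)  # avoid shuffling the caller's list
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            x_adv = fgsm(w, b, x, y, eps)
            for xs in (x, x_adv):  # clean input, then adversarial input, same label
                err = predict(w, b, xs) - y
                w = [wi - lr * err * xi for wi, xi in zip(w, xs)]
                b -= lr * err
    return w, b


def make_data(n, seed):
    """Hypothetical 2-D data: class 1 near (1, 1), class 0 near (-1, -1)."""
    rng = random.Random(seed)
    data = []
    for i in range(n):
        y = i % 2
        c = 1.0 if y else -1.0
        data.append(([c + rng.gauss(0, 0.3), c + rng.gauss(0, 0.3)], y))
    return data


def accuracy(w, b, data, eps=0.0):
    """Accuracy on clean inputs (eps=0) or on FGSM-perturbed inputs."""
    hits = 0
    for x, y in data:
        xe = fgsm(w, b, x, y, eps) if eps > 0 else x
        hits += (predict(w, b, xe) >= 0.5) == (y == 1)
    return hits / len(data)


w, b = adversarial_train(make_data(200, seed=1))
test_set = make_data(100, seed=2)
print(accuracy(w, b, test_set), accuracy(w, b, test_set, eps=0.3))
```

`accuracy(w, b, test_set, eps=0.3)` measures robustness by attacking each test point with the same FGSM budget used during training; comparing it with a model trained only on clean data is a simple way to check whether the defense helps.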

2. Data Privacy Measures

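Implementing data privacy measures such as differential privacy can help protect sensitive information during model training. Differential privacy adds carefully calibrated random noise (in model training, most commonly to clipped per-example gradients, as in DP-SGD) so that the trained model is almost equally likely to result whether or not any single record was included. This limits what an attacker can learn about specific data points while still allowing the model to learn valuable aggregate patterns.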
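The core of a DP-SGD update can be sketched in a few lines. This is a simplified illustration on plain Python lists, with hypothetical gradients and parameter values: each example's gradient is clipped to an L2 norm of at most `max_norm`, the clipped gradients are summed, Gaussian noise with standard deviation `noise_multiplier * max_norm` is added, and the noisy average drives the update. It omits privacy accounting (computing the actual ε guarantee from the noise level, sampling rate, and number of steps), which libraries such as Opacus or TensorFlow Privacy handle.

```python
import math
import random


def clip(grad, max_norm):
    """Scale a gradient vector so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= max_norm:
        return list(grad)
    return [g * max_norm / norm for g in grad]


def dp_sgd_step(weights, per_example_grads, lr, max_norm, noise_multiplier, rng):
    """One DP-SGD update: clip every per-example gradient, sum them, add
    Gaussian noise with std noise_multiplier * max_norm, average, and step."""
    summed = [0.0] * len(weights)
    for g in per_example_grads:
        for i, gi in enumerate(clip(g, max_norm)):
            summed[i] += gi
    noisy = [s + rng.gauss(0.0, noise_multiplier * max_norm) for s in summed]
    n = len(per_example_grads)
    return [w - lr * s / n for w, s in zip(weights, noisy)]


rng = random.Random(0)
grads = [[3.0, 4.0], [0.0, 1.0]]  # hypothetical per-example gradients
new_w = dp_sgd_step([0.0, 0.0], grads, lr=1.0, max_norm=1.0,
                    noise_multiplier=1.1, rng=rng)
print(new_w)
```

Clipping bounds how much any single example can move the model, and the noise masks whatever influence remains; a larger `noise_multiplier` gives stronger privacy at the cost of accuracy.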

3. Model Encryption

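To protect against model theft, encryption techniques can be applied. Encrypting the stored model (its weights and, where relevant, its architecture) makes it unreadable without the decryption key, so a malicious actor who copies the file at rest or intercepts it in transit cannot use it without that key. Encryption does not protect the model once it is decrypted in memory for inference, nor does it stop model extraction through a prediction API, so safeguarding the key and the serving environment matters as much as the encryption itself.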
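As an illustration of encryption at rest, the following sketch uses the third-party `cryptography` package's Fernet recipe (AES-128-CBC with an HMAC-SHA256 integrity check) to encrypt a serialized model. The dictionary of weights and the JSON serialization are assumptions standing in for a real checkpoint format.

```python
import json

from cryptography.fernet import Fernet, InvalidToken


def encrypt_model(weights, key):
    """Serialize hypothetical model weights to JSON and encrypt them with Fernet."""
    return Fernet(key).encrypt(json.dumps(weights).encode("utf-8"))


def decrypt_model(token, key):
    """Decrypt and deserialize; raises InvalidToken for a wrong key or tampered data."""
    return json.loads(Fernet(key).decrypt(token).decode("utf-8"))


weights = {"layer1": [0.12, -0.5, 0.33], "bias": 0.01}  # hypothetical model
key = Fernet.generate_key()  # keep in a secrets manager, never next to the model file
token = encrypt_model(weights, key)
assert decrypt_model(token, key) == weights

try:
    decrypt_model(token, Fernet.generate_key())
except InvalidToken:
    print("wrong key: model cannot be loaded")
```

In practice the key would live in a key-management service or secrets manager rather than in code, and the decrypted weights would exist only in the memory of the serving process.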

4. Fairness Audits

To address fairness concerns, conducting fairness audits of AI models is essential. This involves comparing the model’s behavior across different demographic groups, for example its selection rates and error rates, and making the necessary adjustments to reduce bias.
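A fairness audit usually starts with per-group metrics. The sketch below, using hypothetical labels, predictions, and group names, computes each group's selection rate (the share of positive predictions) and true positive rate, then reports the largest gap between groups for each metric.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Selection rate P(pred=1) and true positive rate P(pred=1 | true=1) per group."""
    counts = defaultdict(lambda: [0, 0, 0, 0])  # n, selected, positives, true positives
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c[0] += 1
        c[1] += p
        c[2] += t
        c[3] += t * p
    return {
        g: {"selection_rate": sel / n, "tpr": tp / pos if pos else float("nan")}
        for g, (n, sel, pos, tp) in counts.items()
    }


def max_gap(rates, metric):
    """Largest difference in a metric between any two groups."""
    values = [r[metric] for r in rates.values()]
    return max(values) - min(values)


# Hypothetical audit data: binary labels, predictions and group membership.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 1, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = group_rates(y_true, y_pred, groups)
print(max_gap(rates, "selection_rate"), max_gap(rates, "tpr"))
```

Here both groups are selected at the same rate of 0.5, so demographic parity holds, yet qualified members of group A are approved less often (a true positive rate of 2/3 versus 1.0 for group B). Because different fairness criteria can disagree like this, an audit should report several metrics; libraries such as Fairlearn provide similar per-group breakdowns.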

Cutting-Edge Techniques for AI Model Security

1. Federated Learning

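Federated learning is a decentralized training approach that keeps data on user devices rather than centralizing it. Each device trains on its own data and sends only model updates to a server, which aggregates them (for example, by averaging) into a new global model. Because raw data never leaves the devices, there is no central training dataset to breach; the updates themselves can still leak information, however, so federated learning is often combined with secure aggregation or differential privacy.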
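A minimal sketch of federated averaging (FedAvg), one common aggregation rule, on a toy one-feature linear regression. The two hypothetical clients hold different slices of data generated from `y = 3x + 1`; each trains locally and returns only its parameters, and the server averages them weighted by client dataset size. Secure aggregation, client sampling, and differential privacy, which production systems add, are omitted.

```python
import random


def local_sgd(w, b, data, lr=0.05, epochs=5):
    """Client-side training of y = w*x + b; only (w, b) leaves the client."""
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return w, b


def federated_averaging(clients, rounds=50):
    """Server loop: broadcast the global model, collect local models,
    and average them weighted by each client's number of examples."""
    w, b = 0.0, 0.0
    total = sum(len(d) for d in clients)
    for _ in range(rounds):
        local = [(local_sgd(w, b, d), len(d)) for d in clients]
        w = sum(lw * n for (lw, _), n in local) / total
        b = sum(lb * n for (_, lb), n in local) / total
    return w, b


def make_client(lo, hi, n, seed):
    """Hypothetical client whose private data follows y = 3x + 1 on [lo, hi]."""
    rng = random.Random(seed)
    xs = [rng.uniform(lo, hi) for _ in range(n)]
    return [(x, 3.0 * x + 1.0) for x in xs]


clients = [make_client(-1.0, 0.0, 20, seed=1), make_client(0.0, 1.0, 30, seed=2)]
w, b = federated_averaging(clients)
print(w, b)
```

After 50 rounds the global parameters approach `w = 3` and `b = 1` even though the server never sees an `(x, y)` pair. The data here is noiseless, so every client shares the same optimum; with heterogeneous real-world data, convergence is slower and less predictable.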

2. Homomorphic Encryption

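Homomorphic encryption allows computations to be performed on encrypted data without decrypting it. It can be applied to protect input data during inference, and in some settings model parameters as well, so that the party running the computation never sees the plaintext. Partially homomorphic schemes support only one operation, such as addition, while fully homomorphic schemes support arbitrary computation at a much higher computational cost, which still limits their practical use, particularly for large models.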
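To show what computing on encrypted data means, here is a toy implementation of the Paillier cryptosystem, a partially (additively) homomorphic scheme: multiplying two ciphertexts adds their plaintexts, and raising a ciphertext to a power multiplies its plaintext by a constant. That is enough for a server to score a linear model on a client's encrypted features. The tiny primes and integer weights are assumptions chosen for readability; this is not secure and is not how a production library works.

```python
import math
import secrets

# Toy Paillier key: these primes are far too small to be secure.
P, Q = 293, 433
N, N2 = P * Q, (P * Q) ** 2
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(p - 1, q - 1)
MU = pow(LAM, -1, N)  # modular inverse (Python 3.8+)


def encrypt(m):
    """Encrypt 0 <= m < N as (N + 1)^m * r^N mod N^2 with random r coprime to N."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return pow(N + 1, m, N2) * pow(r, N, N2) % N2


def decrypt(c):
    """Recover m = L(c^LAM mod N^2) * MU mod N, where L(u) = (u - 1) // N."""
    return (pow(c, LAM, N2) - 1) // N * MU % N


def add(c1, c2):
    """Ciphertext product decrypts to the sum of the plaintexts."""
    return c1 * c2 % N2


def scale(c, k):
    """Ciphertext power decrypts to k times the plaintext."""
    return pow(c, k, N2)


def encrypted_dot(enc_features, weights):
    """Server-side score: sum(w_i * x_i) computed without seeing any x_i."""
    acc = encrypt(0)
    for c, w in zip(enc_features, weights):
        acc = add(acc, scale(c, w))
    return acc


features = [3, 5, 7]  # client's private input
weights = [2, 4, 1]   # hypothetical non-negative integer model weights
enc = [encrypt(x) for x in features]  # client encrypts and sends
print(decrypt(encrypted_dot(enc, weights)))  # client decrypts the score
```

The client encrypts its features, the server computes the encrypted dot product with its plaintext weights, and only the client can decrypt the resulting score of 33. Fully homomorphic libraries such as Microsoft SEAL or OpenFHE extend this idea to multiplication between ciphertexts and approximate real-valued arithmetic.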

3. Continuous Monitoring

Continuous monitoring of AI models in production is crucial for identifying and mitigating security threats. Anomaly detection algorithms can flag unusual model behavior, such as sudden shifts in input distributions, prediction confidence, or query patterns, that may signal an attack or an emerging vulnerability.
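One simple form of such monitoring is sketched below under assumed conditions: the model's confidence scores during a trusted baseline period are recorded, and a sliding window of live scores raises an alert when its mean deviates from the baseline mean by more than `z_threshold` standard errors. The class name, window size, and threshold are hypothetical, and the scores are simulated.

```python
import math
import random
import statistics
from collections import deque


class ConfidenceMonitor:
    """Alerts when the mean confidence over a sliding window drifts too far
    from a baseline recorded while the model was known to behave normally."""

    def __init__(self, baseline_scores, window=50, z_threshold=4.0):
        self.mu = statistics.fmean(baseline_scores)
        self.sigma = statistics.pstdev(baseline_scores)
        self.recent = deque(maxlen=window)
        self.z_threshold = z_threshold

    def observe(self, score):
        """Record one prediction's confidence; return True if the window is anomalous."""
        self.recent.append(score)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough data yet
        mean = statistics.fmean(self.recent)
        stderr = self.sigma / math.sqrt(len(self.recent))
        if stderr == 0:
            return mean != self.mu
        return abs(mean - self.mu) / stderr > self.z_threshold


rng = random.Random(0)
monitor = ConfidenceMonitor([rng.gauss(0.9, 0.05) for _ in range(1000)])
normal = [monitor.observe(rng.gauss(0.9, 0.05)) for _ in range(50)]
shifted = [monitor.observe(rng.gauss(0.6, 0.05)) for _ in range(50)]
print(any(normal), shifted[-1])
```

A sustained drop in confidence, as in the simulated `shifted` stream, could indicate distribution drift, an evasion attempt, or a broken upstream feature; the alert tells operators where to look rather than which of these occurred.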

4. AI for AI Security

Leveraging AI for AI security is an emerging trend. Machine learning systems can be trained to recognize suspicious inputs, query patterns, or model behavior and to respond to threats in real time, providing an additional layer of protection against attacks.

Conclusion

AI model security is a multifaceted concern that requires careful consideration and proactive measures. As AI continues to advance, so do the threats against it. By implementing best practices and staying updated on cutting-edge security techniques, organizations can mitigate risks and protect the integrity and trustworthiness of their AI models. Securing AI models is not just a technical challenge; it is an ethical and legal imperative that will shape the future of AI adoption across industries.